Over the years, I have relied heavily on customer reviews when deciding whether to buy a product or service I found online. As a result, I’ve learned a lot about the dynamics that surround these reviews and how they ultimately affect the purchase intent of thousands of customers.

Let’s get practical. Would you purchase a product or service with 50 negative reviews, 20 neutral reviews and 70 positive reviews?

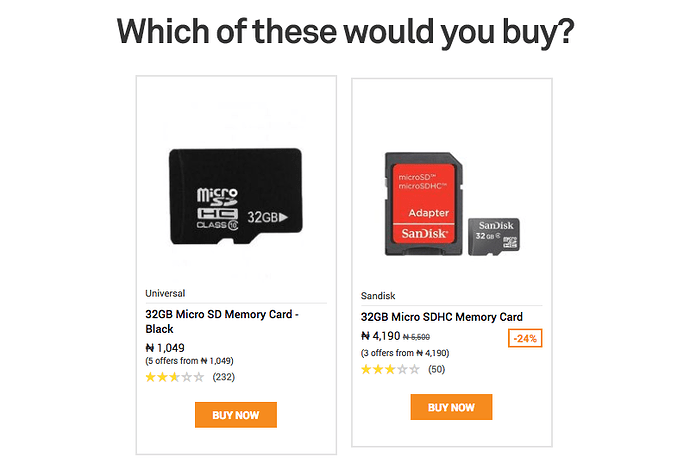

This was my dilemma last weekend as I embarked on a mission to buy a 32GB memory card for my lovely Infinix device.

For an informed consumer who is well aware of the market price of a 32GB memory card, the quality of the one on the left at N1,049 is doubtful.

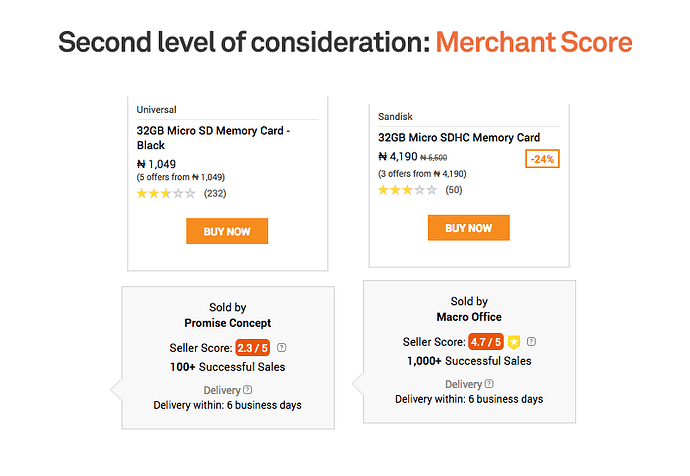

In my case, I decided to explore further by comparing the merchants’ metrics to get a high-level overview of how credible they are. Konga does this better by providing more information about the individual metrics that make up the merchant’s score. Usually, you will see scores for PRODUCT QUALITY, DELIVERY RATE and COMMUNICATION. These metrics formed the basis of my decisions when I started shopping for fashion on Konga, making it easier for my favourite merchants to cross-sell to me whenever they have new stock.

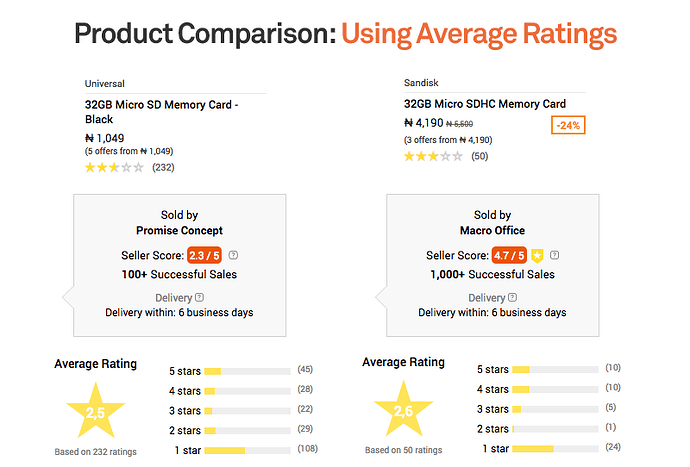

But one concern remains: the memory card is just one item in the merchant’s whole store, so it makes more sense to base purchase intent on product reviews than on the merchant’s total score. A more shocking revelation is that reviews do not always help refine one’s purchase intent. I am of the opinion that negative reviews do not cancel out positive reviews.

While a store might receive a 5 star rating for prompt delivery of a product a consumer ordered, such a review does not cancel out a 1 star review from a user complaining that the product did not meet expectations. 3 star reviews are not neutral reviews either, since the sentiment they express is mixed. They cover both positive and negative aspects, whereas truly neutral reviews would be those that say “I don’t have an opinion”.
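To make the "no cancelling out" point concrete, here is a minimal Python sketch using the 50 / 20 / 70 counts from the example above. The function names `sentiment_shares` and `net_score` are my own, made up for illustration. The idea is simply to report each sentiment's share side by side instead of collapsing everything into one net figure.

```python
# Review counts taken from the example earlier in this article.
reviews = {"negative": 50, "neutral": 20, "positive": 70}


def sentiment_shares(counts):
    """Return each sentiment's share of all reviews, as a fraction."""
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()}


def net_score(counts):
    """The tempting 'cancel out' figure: positives minus negatives."""
    return counts["positive"] - counts["negative"]


shares = sentiment_shares(reviews)
for label, share in shares.items():
    print(f"{label}: {share:.0%}")
print("net score:", net_score(reviews))
```

The net score of 20 looks comfortable, but the shares show that more than a third of the reviewers were unhappy, which is the part a buyer actually cares about.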

This then makes me ask the following questions: Should review volumes matter? Should the venue matter? If taking an average is not appropriate, what should the appropriate baseline be?

Should Volumes Matter?

If the argument is that more sales bring more potential ratings and reviews from those buyers, and you expect your marketing activity or customer loyalty to drive that volume, then using volume as a gauge of how much your customers care about your product is a good reason to focus on it. But if the point is merely to say “we’ve got this many sales”, then we are just using these numbers as vanity metrics that don’t really convey any insight. To put this in a better context, below is a funnel that breaks it down:

Should Venue Matter?

Schweidel and Moe (2014), in their Journal of Marketing Research article, explain how venue format choice affects sentiment. I quite agree with what they found. The summary is this: negative comments have the highest volume on Discussion Forums > Blogs > MicroBlogging, and positive comments have the highest volume on MicroBlogging > Blogs > Discussion Forums.

What this means is this: suppose Wizkid tweets “My new album Sounds from the other side is now out” and posts the same message on a blog, e.g. Naijaloaded, and on a discussion forum like Nairaland. The study tells us that if the volume of comments remains constant across all venues, the most positive sentiment will come from Twitter > Naijaloaded > Nairaland and the most negative comments will come from Nairaland > Naijaloaded > Twitter.

What then should be an appropriate baseline?

One of the key things worth noting is the trigger behind posting reviews. Whenever we buy a product, we experience that product. We first form an expectation of the product experience in our minds, and afterwards we talk about it as a post-purchase evaluation. But having the experience documented in my mind is not enough reason to express it online. Even after forming that evaluation, what triggers me to post? The opinion that I choose to express is going to be driven by what other buyers of the product or service have posted previously.

In this case, we already have a selection effect, which might lead me not to post at all because I feel my opinions have already been expressed by everyone else and I don’t have anything new to contribute.

There is also a high possibility of an adjustment effect, where I choose to alter my post or delay it. Perhaps I haven’t used the product for up to a month, and I feel, based on the reviews already posted, that it took the earlier posters that long to realize what they wrote about.

Another key thing worth considering is that the reviews posted are going to vary significantly, depending on who’s contributing the opinion. We’ve got the general audience (gbogbo ero) versus the expert reviewers. And if their preferences differ, they’re going to express varying overall opinions of a product or service. When looking at these metrics, it’s very tempting to look at an average number without being cognizant of the variation that exists across consumers.
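Here is a small Python sketch of why a single average can mislead when reviewer groups differ. The ratings and the two segment labels (`general`, `expert`) are invented purely for illustration, and `summarize` is a hypothetical helper, not anything a review platform provides.

```python
from statistics import mean, pstdev

# Hypothetical (segment, stars) pairs, invented for illustration only.
ratings = [
    ("general", 5), ("general", 5), ("general", 4), ("general", 5),
    ("expert", 2), ("expert", 1), ("expert", 2), ("expert", 2),
]


def summarize(rows):
    """Group star ratings by reviewer segment: (mean, spread, count)."""
    by_segment = {}
    for segment, stars in rows:
        by_segment.setdefault(segment, []).append(stars)
    return {
        seg: (mean(vals), pstdev(vals), len(vals))
        for seg, vals in by_segment.items()
    }


overall = mean(stars for _, stars in ratings)
summary = summarize(ratings)

print("overall average:", overall)
for seg, (avg, spread, n) in summary.items():
    print(f"{seg}: mean={avg:.2f} spread={spread:.2f} n={n}")
```

With this made-up data the overall average sits in the middle, at a score that neither group actually gave, while the per-segment means show two very different opinions.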

How should businesses address opinion science to fix their UX?

If I had the perfect answer to this question, maybe you would be paying to read this article. But since we are now more aware of how opinion science and its dynamics work, we can rely less on vanity metrics and start taking action based on the insights that matter most to us. I have a short checklist of where we can start looking for answers.

Early vs. Late Reviews

So if our 32GB memory card received 80% of its 4.5 ratings between July and December 2016, and the majority of its negative reviews between January and July 2017, is it safe to conclude that our merchant now has a new supplier and is unaware of the quality issues, or that the dollar rate has pushed our merchant into selling substandard products? For a product like hotels.ng, could it be that our favourite hotel has changed some of its key staff?
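One way to check for this kind of shift is to split the reviews at a cutoff date and compare the two periods. The sketch below assumes each review is a (date, stars) pair. The dates, the ratings and the 1 January 2017 cutoff are all made up to mirror the scenario above, and `split_by_cutoff` is a hypothetical helper.

```python
from datetime import date
from statistics import mean

# Hypothetical (review date, stars) pairs, invented for illustration only.
reviews = [
    (date(2016, 7, 14), 5), (date(2016, 9, 2), 4),
    (date(2016, 10, 21), 5), (date(2016, 12, 5), 4),
    (date(2017, 1, 18), 2), (date(2017, 3, 9), 1),
    (date(2017, 5, 27), 2), (date(2017, 7, 1), 3),
]


def split_by_cutoff(rows, cutoff):
    """Return (early, late) star lists: before the cutoff, and on or after it."""
    early = [stars for day, stars in rows if day < cutoff]
    late = [stars for day, stars in rows if day >= cutoff]
    return early, late


early, late = split_by_cutoff(reviews, date(2017, 1, 1))
print("early average:", mean(early))
print("late average:", mean(late))
print("change:", mean(late) - mean(early))
```

A large drop between the two periods does not tell you the cause (new supplier, exchange rate, staff changes), but it does tell you where to start asking.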

Brand vs Merchant vs Product Sentiment

So if our store truly has very good products, and the failure lies with Jumia delivery agents who did not deliver on time, or who delivered a half-filled bottle of castor oil when I paid for a full one, is this not the brand causing negative reviews for the merchant? Or if a merchant is very reliable, but one product with very bad reviews is dragging down the overall merchant score, is this not the product causing negative reviews for the merchant?

Selection Effect : Interface Design Solution

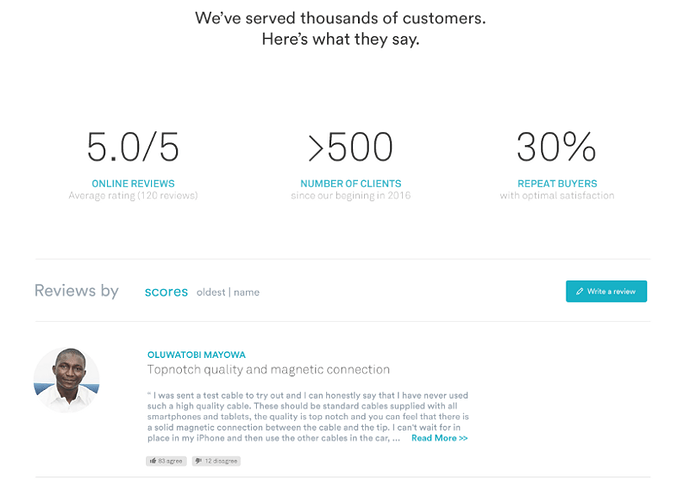

As I explained earlier, the selection effect occurs when buyers do not want to post because their opinions have already been expressed. The interface shown here is one I am currently working on for a reviews page.

Because the product is also B2B, it makes sense to include the percentage of repeat buyers, as well as links to upvote and downvote reviews. An upvote is more like saying, “this is what I wanted to post, bro.” This also addresses the selection effect I talked about earlier.

The Volume Considerations

Despite the argument above about whether volume should count, the simple arithmetic below can mean a lot when using volume as a criterion for decision making.

Negative Reviews + Lower Volume = Might be Nuisance

Positive Reviews + Lower Volume = A Potential

Negative Reviews + Higher Volume = Red Flags

Positive Reviews + Higher Volume = Viral Success
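The four combinations above can be written as a tiny lookup. This is only a sketch: the `volume_threshold` of 30 reviews is an arbitrary number I picked for illustration, and where to draw the line between "lower" and "higher" volume depends on your own product and market.

```python
def classify(sentiment, volume, volume_threshold=30):
    """Map (dominant sentiment, review count) to one of the four labels above.

    `volume_threshold` is an assumed, arbitrary cutoff for "higher" volume.
    """
    if sentiment not in ("negative", "positive"):
        raise ValueError("sentiment must be 'negative' or 'positive'")
    high_volume = volume >= volume_threshold
    labels = {
        ("negative", False): "Might be Nuisance",
        ("positive", False): "A Potential",
        ("negative", True): "Red Flags",
        ("positive", True): "Viral Success",
    }
    return labels[(sentiment, high_volume)]


print(classify("negative", 5))
print(classify("positive", 5))
print(classify("negative", 200))
print(classify("positive", 200))
```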

Kindly post your thoughts, comments and/or additions on how you think opinion science affects UX and possible recommendations on improving the experience for the customers.

Article by: OluwatobiMayowa

Tweets at: @OluwatobiMayowa