Today, this website caught my attention. Or should I say re-caught my attention, because today was not the first time it did.

WHAT DID I NOTICE?

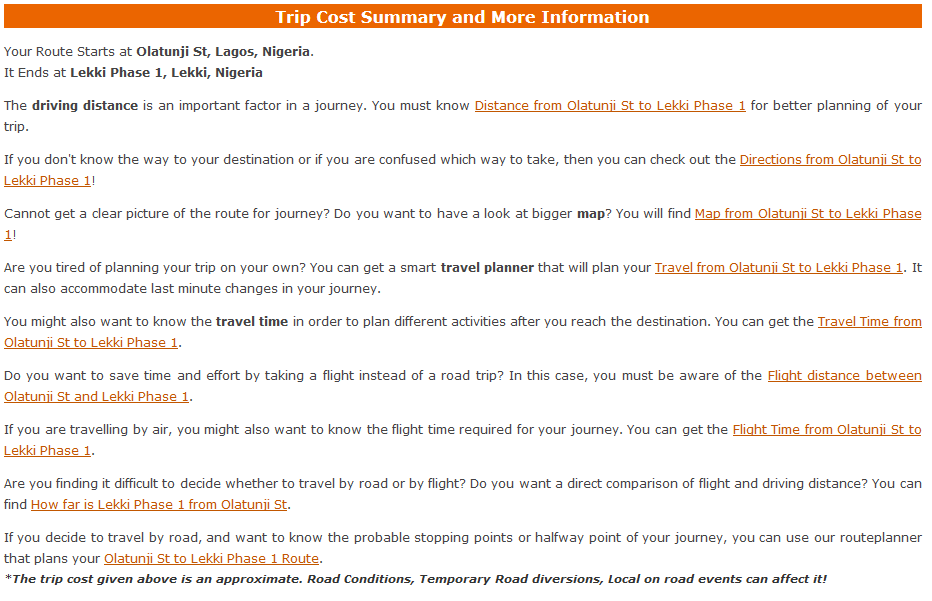

When you Google any phrase of the form “Location A” to “Location B”, the likelihood that this website is among the first five results is eerily high. On opening a couple of the pages, I realised that the content is total fluff, providing next to no value to the visitor. I’ve concluded that they’re basically gaming the system with their SEO expertise. You have to agree with me after seeing the following image:

To me, though, the most interesting detail of this site is in the URLs that the Google search results lead to. They all look like static ASP.NET web pages (.aspx). Example:

MY QUESTION

Hence my question. The naive explanation for how they’ve managed to get their website into Google’s index for several million pairs of locations is that they went to the trouble of pre-generating folders and files for as many pairs of locations as they could find and simply dumped them on a web server.

However, that sounds ridiculous and impractical, especially when you consider the storage implications. I would like to think that there’s some advanced SEO and programming technique in play here that I am not aware of, and that each page in the search results does not actually correspond to a physical file sitting somewhere.
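To make that suspicion concrete, here is a minimal sketch of what I imagine could be happening: a single handler matches the URL against a pattern and fills a template, so no per-pair file ever exists on disk. This is only a guess, not a description of how this particular site works. The URL shape `/directions/<origin>/<destination>.aspx`, the template text, and the names `ROUTE`, `PAGE`, `pretty`, and `render` are all made up for illustration, and I have written it in Python rather than ASP.NET purely for brevity.

```python
import re
from string import Template
from typing import Optional

# Hypothetical URL shape: /directions/<origin>/<destination>.aspx
# The ".aspx" suffix is just text in the path; no such file needs to exist.
ROUTE = re.compile(r"^/directions/(?P<origin>[a-z0-9-]+)/(?P<dest>[a-z0-9-]+)\.aspx$")

# One template shared by every page; the location names fill the placeholders.
PAGE = Template(
    "<h1>$origin to $dest</h1>\n"
    "<p>Travel information from $origin to $dest.</p>"
)


def pretty(slug: str) -> str:
    """Turn a URL slug such as 'new-york' into display text such as 'New York'."""
    return slug.replace("-", " ").title()


def render(path: str) -> Optional[str]:
    """Return the HTML for a matching path, or None if the path does not match."""
    match = ROUTE.match(path)
    if match is None:
        return None
    return PAGE.substitute(
        origin=pretty(match.group("origin")),
        dest=pretty(match.group("dest")),
    )


if __name__ == "__main__":
    print(render("/directions/new-york/los-angeles.aspx"))
```

With something like this, the number of reachable URLs is limited only by the number of location pairs the handler accepts, while storage stays at one template plus whatever location data is looked up.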

It is interesting to note that the owners of this website also have several other websites built around the same idea: leading people to pages that contain a lot of similar information, with the current search terms more or less just filling in placeholders.

Someone, enlighten me please.